
9th May 2023

Web Control Panel – Non Blocking Web Server Using Asyncio and Dual Cores – Raspberry Pi Pico, ESP32, Arduino

In our previous tutorial we developed a MicroPython Web server that could receive an HTTP request, decode it, carry out any actions required, and prepare an HTTP response to send back to the client. This server is the basis of a full web control panel that lets us control and monitor our circuit remotely from a web browser. However, the software ran as a single process on a single core of our microcontroller.

But we identified a major weakness in that code.

Once we had bound our socket to the HTTP port, we could accept a connection using the socket object's accept method. However, accept is what's known as a blocking method: once it is called, our code sits at that instruction until a client connects. While we are waiting, the processor core is effectively idle and none of our other code runs.
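To make the problem concrete, here is a minimal sketch using desktop Python's socket module rather than the MicroPython listing from the previous tutorial. The timeout exists only so the demo finishes; since no client ever connects, the function spends the whole timeout stuck inside accept() and nothing else in the program can run during that time.

```python
import socket
import time

def wait_for_client(timeout_s):
    """Listen on a free local port and wait in accept() for up to timeout_s seconds."""
    srv = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    srv.bind(("127.0.0.1", 0))   # port 0: let the OS choose a free port
    srv.listen(1)
    srv.settimeout(timeout_s)    # demo only: without this, accept() waits forever
    start = time.monotonic()
    try:
        conn, _addr = srv.accept()   # execution stops on this line until a client connects
        conn.close()
        connected = True
    except socket.timeout:
        connected = False            # nobody connected; the whole wait was spent in accept()
    finally:
        srv.close()
    return connected, time.monotonic() - start

connected, waited = wait_for_client(0.5)
print(f"connected={connected}, time stuck in accept(): {waited:.2f} s")
```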

If our project simply monitors a range of sensors, for example, this might not be a problem, since we only need to take sensor readings when they are requested through an API call.

But if a project needs timing-sensitive operations, such as driving a motor or detecting collisions, this blocking code simply won't work.

So in this tutorial we'll develop two techniques that let us run our Web server code alongside our main control software, and then combine them.

The Test Circuit

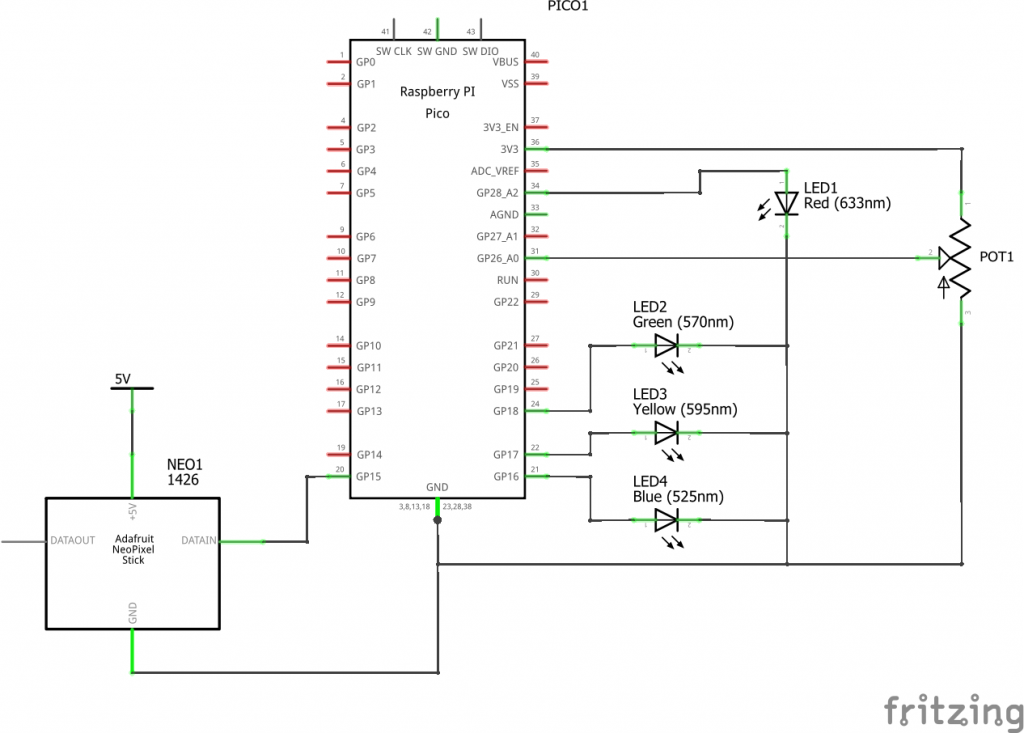

To show that our software is running I’ve put together a simple test circuit that includes a number of inputs and outputs.

In the circuit I’ve added a potentiometer, a number of LEDs and a neopixel stick with eight RGB LEDs.

We’ll be using the potentiometer and the inbuilt temperature sensor on the Raspberry Pi Pico to display gauge readings on our control panel. We’ll have buttons on our control panel that will allow us to turn on and off the blue, yellow and green LEDs. The single red LED will be pulsed on and off by internal control software running on our Pi Pico. The rightmost four pixels on the neopixel strip will run a continuous stream of random colours, again under direct control of the Pi Pico. The leftmost four pixels will be controlled by red, green and blue slider controls on our web control panel.

Asynchronous Multitasking

The first technique uses the MicroPython asyncio package (imported as uasyncio in our code) to provide an asynchronous multitasking environment. This lets a single core run multiple tasks concurrently: the tasks take turns rather than truly running in parallel.

We basically create an individual task for a particular job that our microcontroller has to carry out. For example, our Web server code would be one task and the control of our circuit components would be another task.

Once we create these two tasks, the asyncio scheduler switches the processor core between them so that both appear to run at the same time. The switching is cooperative: a task hands over control only when it awaits something. So while our Web server task is waiting for the next HTTP connection, it is suspended and the scheduler lets our other tasks run.
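The turn-taking can be seen in a few lines. This sketch uses CPython's asyncio so it runs on a desktop; MicroPython's uasyncio offers create_task, sleep and run under the same names. The two worker tasks are hypothetical stand-ins for the Web server and the circuit control code, and each hands control back with a zero-length sleep after every step.

```python
import asyncio

log = []

async def worker(name, steps):
    for i in range(steps):
        log.append(f"{name}{i}")
        await asyncio.sleep(0)   # yield so the scheduler can run the other task

async def main():
    t1 = asyncio.create_task(worker("A", 3))
    t2 = asyncio.create_task(worker("B", 3))
    await t1
    await t2

asyncio.run(main())
print(log)   # the entries from A and B alternate
```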

Setting up the tasks in MicroPython using asyncio is relatively straightforward. We simply have to create asynchronous functions for each of the tasks we want to run.

async def myTask():

Here the async keyword defines this function as a coroutine. Coroutines are Python functions structured so that they can run inside our multitasking environment. The main difference we need to concern ourselves with is that, at some point, each coroutine must await an awaitable, such as another coroutine.

Awaiting one of these pauses the current task and allows other tasks that are waiting for processor time to run. The awaitable carries out its work, and once that is complete the paused task is put back on the event loop. When the scheduler is next able to run this task, it picks up where it left off, immediately after the await.
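As a rough illustration (CPython asyncio again, with hypothetical task names), the sketch below pauses slow_job on a 0.1 second sleep. While it is paused, the background counter task keeps getting turns, and when the sleep finishes slow_job resumes on the line straight after its await.

```python
import asyncio

ticks = 0

async def counter():
    """Background task: counts how often it gets a turn on the processor."""
    global ticks
    while True:
        ticks += 1
        await asyncio.sleep(0.01)   # give up the processor for about 10 ms

async def slow_job():
    before = ticks
    await asyncio.sleep(0.1)   # this task is paused here; other tasks run meanwhile
    # Execution resumes on this line once the sleep has finished
    return ticks - before

async def main():
    background = asyncio.create_task(counter())
    progressed = await slow_job()
    background.cancel()        # stop the endless background task
    return progressed

progressed = asyncio.run(main())
print(f"counter() ran {progressed} times while slow_job() was paused")
```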

The full code for this tutorial is available for download through my GitHub repository.

https://github.com/getis/micropython-web-control-panel

If you look in the file api_async.py you’ll see the full listing for the asyncio powered Web server.

At the bottom of the listing you’ll see the code that sets up the asynchronous multitasking. We first need to run a main task and attach it to a new event loop. This main task is simply a coroutine which will set up and start any other parallel tasks that we need to run.
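The exact start-up lines from api_async.py aren't reproduced here, so treat the following as one common way of doing it, sketched with CPython's asyncio: create an event loop, hand it the main coroutine, and let main start the other tasks. The child names are hypothetical. In the real project main never returns, so run_forever() or run() would be used instead of run_until_complete().

```python
import asyncio

started = []

async def child(name):
    started.append(name)   # stand-in for the real work of each task
    await asyncio.sleep(0)

async def main():
    # main's job here is simply to start the other tasks
    asyncio.create_task(child("web_server"))
    asyncio.create_task(child("neopixels"))
    await asyncio.sleep(0.05)   # keep main alive long enough for the children to run

loop = asyncio.new_event_loop()
try:
    loop.run_until_complete(main())
finally:
    loop.close()
print(started)
```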

If we look inside the main function we'll see the following code:

# main coroutine to boot async tasks
async def main():
    # start web server task
    print('Setting up webserver...')
    server = uasyncio.start_server(handle_request, "0.0.0.0", 80)
    uasyncio.create_task(server)

    # start top 4 neopixel updating task
    uasyncio.create_task(neopixels())

    # main task control loop pulses red led
    counter = 0
    while True:
        if counter % 1000 == 0:
            IoHandler.toggle_red_led()
        counter += 1
        # 0 second pause to allow other tasks to run
        await uasyncio.sleep(0)

The asyncio package was designed with network jobs such as HTTP requests in mind, and it includes a start_server function that accepts incoming TCP connections over our Wi-Fi connection asynchronously. We simply need to supply a request handler function that receives each connection and processes it.
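Here is a minimal sketch of that idea using CPython's asyncio, where start_server is awaited and returns a Server object (the listing above instead wraps uasyncio.start_server in create_task). The handler and control_loop are hypothetical stand-ins, and the demo connects to itself on a free local port to show that the server answers while the control task keeps running.

```python
import asyncio

control_ticks = 0

async def handle_request(reader, writer):
    """Called by start_server once per incoming connection."""
    await reader.read(2048)                 # minimal handler: ignore the request contents
    writer.write(b"HTTP/1.0 200 OK\r\n\r\nOK")
    await writer.drain()
    writer.close()
    await writer.wait_closed()

async def control_loop():
    """Stand-in for the project's control logic."""
    global control_ticks
    while True:
        control_ticks += 1
        await asyncio.sleep(0)

async def main():
    server = await asyncio.start_server(handle_request, "127.0.0.1", 0)
    port = server.sockets[0].getsockname()[1]
    control = asyncio.create_task(control_loop())
    # Act as a client so the demo can check that the server answered
    reader, writer = await asyncio.open_connection("127.0.0.1", port)
    writer.write(b"GET / HTTP/1.0\r\n\r\n")
    await writer.drain()
    reply = await reader.read()             # read until the server closes the connection
    writer.close()
    await writer.wait_closed()
    control.cancel()
    server.close()
    await server.wait_closed()
    return reply

reply = asyncio.run(main())
print(reply, control_ticks)
```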

We can then create other asynchronous tasks by wrapping the code in further coroutines and then starting those as new tasks.

Finally, our main function also represents a task so we can actually perform some control logic within this function which will again run in parallel with any of the other tasks we’ve defined.

The important part of ensuring this function operates as a coroutine is the await line.

The await keyword runs the awaitable that follows it and suspends this task until it completes. As we said earlier, this pauses the task and lets the other tasks in the queue get some runtime. Here we use uasyncio's own sleep function and tell this task to sleep for zero seconds. This pauses the task, lets the other tasks run, and puts this task straight back onto the end of the execution queue, ready to resume.
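The red LED loop can be tried off the hardware with a stub. In this CPython sketch, toggle_red_led is a hypothetical stand-in for IoHandler.toggle_red_led(), and the loop is bounded so it ends. It toggles on every 1000th pass, while the zero-length sleep lets other_task run once for roughly every pass of the loop.

```python
import asyncio

toggles = 0
other_runs = 0

def toggle_red_led():
    """Hypothetical stand-in for IoHandler.toggle_red_led() (no hardware here)."""
    global toggles
    toggles += 1

async def other_task():
    global other_runs
    while True:
        other_runs += 1
        await asyncio.sleep(0)

async def main(iterations):
    asyncio.create_task(other_task())
    counter = 0
    while counter < iterations:          # the real loop is `while True`
        if counter % 1000 == 0:
            toggle_red_led()
        counter += 1
        await asyncio.sleep(0)           # zero-length sleep: requeue this task, let others run

asyncio.run(main(3000))
print(toggles, other_runs)
```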

The Neopixel Handler

If we now look at the neopixel handler task we can see that it very much follows the same format. We have a main control loop that cycles the colours of the RGB LEDs but each time we run through the loop we again call an awaitable coroutine that allows other tasks to gain access to the processor.
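A hardware-free sketch of such a task might look like the following. The plain list stands in for a NeoPixel buffer, and treating indices 4 to 7 as the randomly coloured half of the stick is an assumption. On a Pico the list would be a neopixel.NeoPixel object, followed by a call to its write() method after each update.

```python
import asyncio
import random

NUM_PIXELS = 8
pixels = [(0, 0, 0)] * NUM_PIXELS   # hypothetical stand-in for a NeoPixel buffer

async def neopixels(cycles):
    """Fill pixels 4-7 with random colours, yielding after every update."""
    for _ in range(cycles):                 # the real task would loop forever
        for i in range(4, NUM_PIXELS):      # assumption: indices 4-7 are the random-colour pixels
            pixels[i] = (random.randint(0, 255), random.randint(0, 255), random.randint(0, 255))
        # on hardware, np.write() would push the buffer to the strip here
        await asyncio.sleep(0.01)           # hand the processor back to the other tasks

random.seed(1)
asyncio.run(neopixels(5))
print(pixels)
```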

If we didn't have any await calls, this task would hog the processor once it gained control and never release it to any other tasks. So when we create our coroutine tasks, we must build in points where other tasks can take control.
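The difference is easy to see by running the same pair of hypothetical tasks twice with CPython's asyncio. When hog never awaits, it runs to completion before polite gets a single turn; adding one await per step makes them alternate.

```python
import asyncio

def run_pair(hog_yields):
    """Run a 'hog' task alongside a 'polite' task and return the order they ran in."""
    order = []

    async def hog():
        for i in range(3):
            order.append(f"hog{i}")
            if hog_yields:
                await asyncio.sleep(0)   # the fix: give other tasks a turn

    async def polite():
        for i in range(3):
            order.append(f"polite{i}")
            await asyncio.sleep(0)

    async def main():
        await asyncio.gather(hog(), polite())

    asyncio.run(main())
    return order

print(run_pair(hog_yields=False))
print(run_pair(hog_yields=True))
```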

The Asynchronous Web Server

Looking now at the handle_request function, we can see that it is a slightly modified version of our previous Web server code: we have simply replaced a few of the function calls with await versions. At the start of the function we need the next HTTP request message. We already know there may be a delay before it arrives, so we read it with the awaitable reader.read method.

raw_request = await reader.read(2048)

Here our task will still be monitoring for the HTTP request arriving but will not block the processor, allowing other tasks to run. When an HTTP request is received the coroutine will collect the data and return our Web server task to the task queue. Eventually our Web server task will get to the top of the queue and execution will continue.

If you then look at the bottom of the function you’ll see more await instructions wrapping the final sending of the response message back to the client. Again this is another process which takes an amount of time to complete as the data is sent over the Wi-Fi channel so we again execute this in a way that doesn’t block the rest of the processor tasks while this data is being sent out.
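The overall shape of such a handler is sketched below with CPython's asyncio streams. This is not the listing from api_async.py: the plain-text body is hypothetical, and MicroPython's uasyncio streams provide a subset of this API, so check the listing for the exact calls it uses. The request is read with an await, and the response is queued with write() and then flushed with an awaited drain() so sending doesn't hold up other tasks.

```python
import asyncio

async def handle_request(reader, writer):
    # 1. Wait for the request without blocking other tasks
    raw_request = await reader.read(2048)
    request_line = raw_request.decode().split("\r\n")[0]   # e.g. "GET /api/status HTTP/1.1"
    method, path, _version = request_line.split(" ", 2)

    # 2. Build the response (hypothetical plain-text body)
    body = f"{method} {path}".encode()
    header = (f"HTTP/1.0 200 OK\r\nContent-Type: text/plain\r\n"
              f"Content-Length: {len(body)}\r\n\r\n").encode()

    # 3. Queue the data, then await drain() so sending doesn't block other tasks
    writer.write(header + body)
    await writer.drain()
    writer.close()
    await writer.wait_closed()

async def fetch(path):
    """Start the server on a free local port and send it a single request."""
    server = await asyncio.start_server(handle_request, "127.0.0.1", 0)
    port = server.sockets[0].getsockname()[1]
    reader, writer = await asyncio.open_connection("127.0.0.1", port)
    writer.write(f"GET {path} HTTP/1.1\r\nHost: pico\r\n\r\n".encode())
    await writer.drain()
    response = await reader.read()
    writer.close()
    await writer.wait_closed()
    server.close()
    await server.wait_closed()
    return response.decode()

print(asyncio.run(fetch("/api/status")))
```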

Multicore Processing

Quite a few microcontrollers, such as the Raspberry Pi Pico, have more than one processor core. This gives us an alternative way to run our Web server in the background while our main control loop runs.

The code for this demonstration is in the api_threaded.py file.

Here we use exactly the same code as in the previous tutorial, including the blocking function that waits for the next HTTP request. But now we divide our code into two main functions: one handles the Web server and the other handles our time-critical control software.

If you look at the end of this file, you'll see the code that starts our main_loop control function on the second processor core, using the line

second_thread = _thread.start_new_thread(main_loop, ())

This then leaves our first processor core free to run the Web server software.
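The same split can be sketched on a desktop with Python's _thread module. On a Pico, MicroPython's _thread.start_new_thread runs the function on core 1; in CPython it is an ordinary OS thread, but the blocking accept() call releases the interpreter lock, so the pattern still shows the control loop running while the server is stuck waiting. Both functions are hypothetical stand-ins, and the lock is only there so the script can wait for the thread to exit.

```python
import _thread
import socket
import time

loop_count = 0
stop = False
finished = _thread.allocate_lock()

def main_loop():
    """Stand-in for the time-critical control loop started on the second core."""
    global loop_count
    while not stop:
        loop_count += 1      # e.g. pulse an LED, read a sensor...
        time.sleep(0.001)
    finished.release()       # signal that the loop has exited

def blocking_server(timeout_s):
    """Stand-in for the blocking web server: sits in accept() waiting for a client."""
    srv = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    srv.bind(("127.0.0.1", 0))
    srv.listen(1)
    srv.settimeout(timeout_s)   # demo only, so the script terminates
    try:
        conn, _addr = srv.accept()
        conn.close()
    except socket.timeout:
        pass
    finally:
        srv.close()

finished.acquire()                                        # held until main_loop releases it
second_thread = _thread.start_new_thread(main_loop, ())
blocking_server(0.3)    # this thread is blocked, yet main_loop keeps counting
stop = True
finished.acquire()      # wait for main_loop to finish
print(f"main_loop ran {loop_count} times while the server was blocked")
```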

Note: I found that the Web server software was very unreliable when running on the second core (Core 1).

For more information and a full tutorial on using multiple cores in MicroPython please have a look at my previous tutorial – Dual Core Processing in Micropython

Combining Multitasking and Multithreading

Using both cores, with one running the blocking version of the Web server, means that one of our cores will spend most of its time doing nothing while it waits for the next HTTP request. Our first technique showed that we can get around blocking functions on a single core by using asynchronous code.

So the final version of our Web server combines both techniques: one processor core runs part of our control software at full speed, while the other core runs the asynchronous Web server alongside a number of other asynchronous tasks that handle other parts of our project.
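A desktop sketch of the combined layout, under the same caveats as before (CPython threads rather than a second core, hypothetical task names): a plain function runs on a second thread with no asyncio involved, while the first thread runs an event loop hosting cooperative tasks. In the real project the asynchronous Web server would be one of the tasks started in main.

```python
import _thread
import asyncio
import time

fast_ticks = 0
async_ticks = 0
stop = False
finished = _thread.allocate_lock()

def fast_control_loop():
    """Runs on the second thread (core 1 on a Pico) with no asyncio involved."""
    global fast_ticks
    while not stop:
        fast_ticks += 1
        time.sleep(0.001)
    finished.release()

async def slow_task():
    """One of several cooperative tasks sharing the first core with the web server."""
    global async_ticks
    while True:
        async_ticks += 1
        await asyncio.sleep(0.01)

async def main(duration_s):
    asyncio.create_task(slow_task())
    # the asynchronous web server task would also be created here
    await asyncio.sleep(duration_s)

finished.acquire()
_thread.start_new_thread(fast_control_loop, ())
asyncio.run(main(0.3))
stop = True
finished.acquire()   # wait for the control thread to exit
print(fast_ticks, async_ticks)
```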

It’s worth noting at this point that you do need to choose carefully which parts of your project run as tasks in which processor core.

The asynchronous core isn't truly running its tasks in parallel. Each task runs in turn: it must wait for the other tasks to release control, run for a short while, and then release control itself. How long a task waits between releasing control and getting its next slice of runtime dictates how long it is paused. If this pause becomes significant, it can upset time-critical operations. So bear this in mind when you decide where to place particular blocks of code in your multitasking, multicore system.
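One way to get a feel for this pause is to measure it. In this CPython sketch, with hypothetical names and timings, timed_task asks to wake every 10 ms, while busy_task does 50 ms of work between awaits. Because nothing can interrupt busy_task mid-chunk, each wake-up arrives tens of milliseconds late.

```python
import asyncio
import time

lateness = []

async def timed_task(period_s, cycles):
    """Wants to wake up every period_s seconds; records how late each wake-up is."""
    for _ in range(cycles):
        target = time.monotonic() + period_s
        await asyncio.sleep(period_s)
        lateness.append(time.monotonic() - target)

async def busy_task(chunk_s, cycles):
    """Does chunk_s seconds of work between awaits, holding the processor meanwhile."""
    for _ in range(cycles):
        end = time.monotonic() + chunk_s
        while time.monotonic() < end:
            pass                      # busy work: no await, so nothing else can run
        await asyncio.sleep(0)

async def main():
    await asyncio.gather(timed_task(0.01, 5), busy_task(0.05, 5))

asyncio.run(main())
print(f"worst wake-up delay: {max(lateness) * 1000:.0f} ms")
```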

What Next?

We now have a fully working, non-blocking Web server that can serve static files in response to HTTP requests and can respond to REST API calls.

All we need now is a web-based control panel that will allow us to communicate, monitor and control our project. We’ll cover this in the next tutorial.